Impact Intelligence needed to replace slow, manual interview operations with a system that could run high-volume stakeholder conversations across multiple regions and languages. Its public product promise is clear: no scheduling bottlenecks, no manual transcription workflow, and an easier path from raw interviews to strategy decisions.

Under the hood, delivering that promise required more than a single app. We designed and shipped a full interview operating system: a Rails management backend, a React interview experience, a FastAPI orchestration layer, a per-application LLM service on Cloud Run, and a Retell integration for real-time voice calls.

The Core Problem: Manual Research Methods Do Not Scale

Traditional qualitative research breaks under volume. Teams spend most of their time coordinating logistics: scheduling interviews, sharing links, handling no-shows, collecting recordings, and cleaning transcripts. By the time findings are ready, the decision window has often passed.

Impact Intelligence needed a platform that could:

- Launch interviews on demand with secure, unique links

- Support multilingual voice interactions across dozens of language codes

- Track interview lifecycle states reliably from start to disconnect

- Store and export transcript artifacts for downstream analysis

- Give non-technical operators a reliable admin control plane

Architecture: Four Layers Working Together

- Management backend: Rails 7.1 + ActiveAdmin + PostgreSQL for application setup, role permissions, quota controls, link generation, and transcript exports.

- Interview frontend: React + Retell Web SDK for participant flows, real-time call state handling, multilingual UI, and controlled start/stop behavior.

- Orchestration API: FastAPI service that bridges frontend events and the Rails API, creates Retell web calls, and writes transcript payloads to cloud storage.

- LLM runtime: Python WebSocket service on Google Cloud Run that streams OpenAI-generated interviewer responses using bot-specific prompt context.

How Interview Bots Are Provisioned

Internal teams create a new interview application inside ActiveAdmin with interview context, audience profile, question set, language, and voice configuration. On creation, the platform deploys an isolated Cloud Run LLM endpoint, then creates a Retell agent mapped to that endpoint.

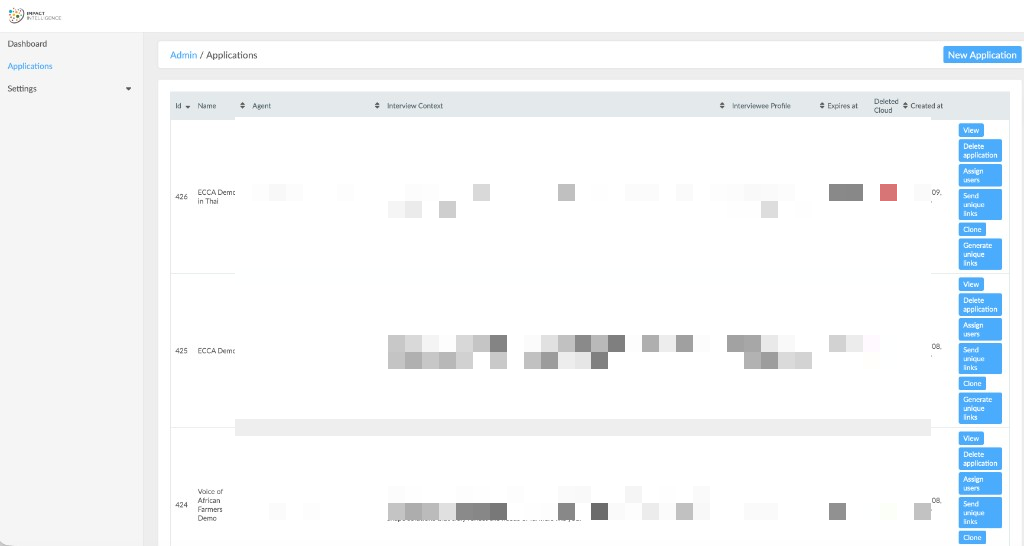

This gives every interview project its own runtime context while keeping operator setup in one admin flow. We also added clone support so teams can duplicate a working setup and launch new studies faster.
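The provisioning order described above (deploy the LLM endpoint first, then create the agent that points at it) can be sketched as follows. This is a minimal illustration, not the project's actual code: `InterviewApp`, `provision`, `clone`, and the two injected callables are hypothetical names, and the real Cloud Run and Retell calls are deliberately left behind those callables.

```python
from dataclasses import dataclass, replace
from typing import Callable, Optional, Tuple


@dataclass(frozen=True)
class InterviewApp:
    """Hypothetical record for one interview application."""
    slug: str
    language: str
    voice: str
    questions: Tuple[str, ...]
    llm_url: Optional[str] = None   # filled in once the LLM endpoint is deployed
    agent_id: Optional[str] = None  # filled in once the voice agent exists


def provision(
    app: InterviewApp,
    deploy_llm: Callable[[InterviewApp], str],
    create_agent: Callable[[InterviewApp, str], str],
) -> InterviewApp:
    """Deploy the LLM endpoint, then create an agent mapped to it."""
    # Step 1: the isolated LLM endpoint must exist before the agent can reference it.
    llm_url = deploy_llm(app)
    # Step 2: the agent is created with the endpoint URL from step 1.
    agent_id = create_agent(app, llm_url)
    return replace(app, llm_url=llm_url, agent_id=agent_id)


def clone(app: InterviewApp, new_slug: str) -> InterviewApp:
    """Copy the configuration only; the clone still needs its own runtime."""
    return replace(app, slug=new_slug, llm_url=None, agent_id=None)
```

Passing the deployment steps in as callables keeps the ordering logic testable without touching any cloud service. In this sketch a clone starts without an endpoint or agent, on the assumption that each project gets its own runtime.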

Secure Link and Access Workflow

Each participant receives a unique interview URL tied to an application slug and token. When a session starts, the API verifies that the link belongs to the application, that it has not expired, and that the application's cloud runtime is available. It also checks Retell concurrency before allowing a new call session to begin.

This protects data quality and avoids over-capacity failures during peak interview windows.
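A minimal sketch of that admission sequence, under stated assumptions: `InterviewLink`, `admit`, the reason strings, and the `max_concurrent_calls` parameter are hypothetical, and the runtime health and active call count are passed in as plain values rather than fetched from Cloud Run or Retell.

```python
import hmac
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Tuple


@dataclass(frozen=True)
class InterviewLink:
    """Hypothetical stored link: one per participant."""
    app_slug: str
    token: str
    expires_at: datetime


def admit(
    link: InterviewLink,
    *,
    slug: str,
    token: str,
    now: datetime,
    runtime_ready: bool,
    active_calls: int,
    max_concurrent_calls: int,
) -> Tuple[bool, str]:
    """Run the checks in order and return (allowed, reason)."""
    # Ownership: the slug must match and the token is compared in constant time.
    token_ok = hmac.compare_digest(link.token.encode(), token.encode())
    if link.app_slug != slug or not token_ok:
        return False, "invalid_link"
    # Expiry: a link is rejected at or after its expiry time.
    if now >= link.expires_at:
        return False, "link_expired"
    # Runtime: the application's LLM endpoint must be reachable.
    if not runtime_ready:
        return False, "runtime_unavailable"
    # Capacity: refuse new calls once the concurrency limit is reached.
    if active_calls >= max_concurrent_calls:
        return False, "at_capacity"
    return True, "ok"
```

Returning a reason alongside the decision lets the frontend show a specific message (expired link versus busy system) instead of a generic failure.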

Voice Session Reliability and Status Tracking

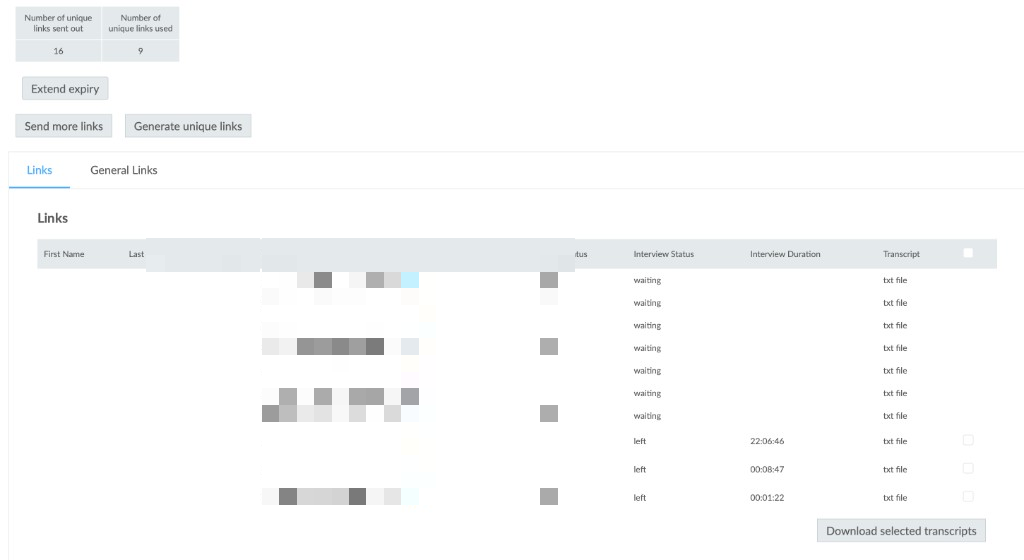

Reliable interview state was a major requirement. The platform tracks both user-visible lifecycle states (waiting, started, done, left, stopped) and Retell call telemetry through dedicated call tracking and call log models.

We implemented internal polling endpoints to reconcile final call outcomes, disconnection reasons, and durations. That gives operators dependable records even when network interruptions or abrupt exits happen mid-session.
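The sketch below shows one way the lifecycle states and the reconciliation step could fit together. The five state names come from the platform. The transition table, the rule that an ended call still marked `started` becomes `left`, and the telemetry keys (`ended`, `disconnection_reason`, `duration_seconds`) are illustrative assumptions, not the actual models or the Retell response format.

```python
from enum import Enum
from typing import Any, Dict, Mapping


class SessionState(str, Enum):
    WAITING = "waiting"
    STARTED = "started"
    DONE = "done"
    LEFT = "left"
    STOPPED = "stopped"


# Assumed transition table: done, left, and stopped are terminal.
ALLOWED_TRANSITIONS = {
    SessionState.WAITING: {SessionState.STARTED, SessionState.LEFT},
    SessionState.STARTED: {SessionState.DONE, SessionState.LEFT, SessionState.STOPPED},
    SessionState.DONE: set(),
    SessionState.LEFT: set(),
    SessionState.STOPPED: set(),
}


def can_transition(current: SessionState, target: SessionState) -> bool:
    """Return True when moving from current to target is allowed."""
    return target in ALLOWED_TRANSITIONS[current]


def reconcile(current: SessionState, telemetry: Mapping[str, Any]) -> Dict[str, Any]:
    """Merge polled call telemetry into the final session record."""
    state = current
    # If the provider reports the call as ended but the client never reported
    # an outcome (for example after a dropped connection), close the session.
    if telemetry.get("ended") and current is SessionState.STARTED:
        state = SessionState.LEFT
    return {
        "state": state.value,
        "disconnection_reason": telemetry.get("disconnection_reason"),
        "duration_seconds": telemetry.get("duration_seconds"),
    }
```

A polling job could call `reconcile` for every session that is not yet terminal, so a session interrupted mid-call still ends up with a final state, a reason, and a duration.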

Multilingual Experience End-to-End

The platform supports a broad language matrix in both UI and interviewer behavior. Language code is passed through routing, prompt composition, and opening/closing interview phrases, so participants can complete interviews in their selected language without forcing English fallback behavior.
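As a rough illustration of carrying the language code into prompt composition, the sketch below resolves a code to a phrase set and appends language instructions to a base prompt. The phrase table, the function names, and the prompt wording are all hypothetical. Unsupported codes raise an error here rather than silently falling back to English, which is one possible reading of the behaviour described above.

```python
from typing import Dict

# Hypothetical opening/closing phrases keyed by base language code.
PHRASES: Dict[str, Dict[str, str]] = {
    "en": {"opening": "Hello, thank you for joining.", "closing": "Thank you for your time."},
    "es": {"opening": "Hola, gracias por participar.", "closing": "Gracias por su tiempo."},
}


def resolve_language(code: str) -> str:
    """Map a code such as 'es-MX' or 'es_MX' to a supported phrase set."""
    normalized = code.strip().replace("_", "-")
    if normalized in PHRASES:
        return normalized
    # Fall back from a regional variant to its base language only.
    base = normalized.split("-")[0].lower()
    if base in PHRASES:
        return base
    raise LookupError(f"unsupported language code: {code!r}")


def compose_prompt(base_prompt: str, language_code: str) -> str:
    """Append language instructions and fixed phrases to the bot prompt."""
    phrases = PHRASES[resolve_language(language_code)]
    return "\n".join(
        [
            base_prompt,
            f"Conduct the entire interview in the language with code {language_code}.",
            f"Open with: {phrases['opening']}",
            f"Close with: {phrases['closing']}",
        ]
    )
```

For example, `compose_prompt("You are a research interviewer.", "es-MX")` yields a prompt that names the `es-MX` code and uses the Spanish opening and closing phrases.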

Transcript and Insight Pipeline

At call completion or user exit, transcripts are captured through the Retell-integrated flow and written to cloud storage. Admin users can download selected transcript sets as zip bundles directly from the operations interface.

This closes the loop from interview execution to analysis handoff with minimal manual operations work.
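The batch export step amounts to packing selected transcripts into one archive. The sketch below shows that idea in Python for consistency with the orchestration layer. The function name, the JSON payload shape, and the file naming are assumptions, and the admin export itself may be implemented differently.

```python
import io
import json
import zipfile
from typing import Any, Mapping


def bundle_transcripts(transcripts: Mapping[str, Mapping[str, Any]]) -> bytes:
    """Pack transcripts (keyed by a call identifier) into an in-memory zip."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        # Sort by identifier so the archive layout is stable between exports.
        for call_id, payload in sorted(transcripts.items()):
            body = json.dumps(dict(payload), ensure_ascii=False, indent=2)
            archive.writestr(f"{call_id}.json", body)
    return buffer.getvalue()
```

Building the archive in memory suits small selections. For very large exports, writing to a temporary file or streaming the response would be a safer choice.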

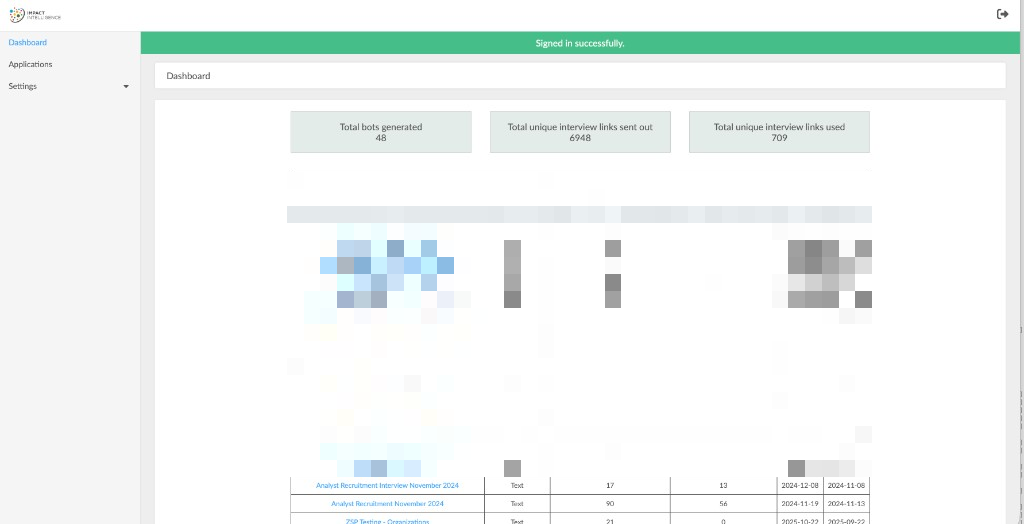

Operations Dashboard Screenshots (Sanitized)

The screenshots in this article are real product screens from the project. Personal data and sensitive identifiers have been pixelated before publishing.

What Shipped

- Production Rails admin platform with role-based access and editor quotas

- Retell-powered web interview experience with secure start, stop, and completion flow

- Cloud Run deployed LLM services configured per interview application

- Multilingual interviewing support across dozens of language codes

- Concurrency-aware call admission checks and link expiry controls

- Call tracking and lifecycle reconciliation via internal polling workflows

- Transcript capture to cloud storage with batch export from admin

- Automated link generation, with links ready to send in participant invites

Business Outcome

The final platform gives Impact Intelligence a repeatable system for running confidential, multilingual interviews at scale, while reducing manual research coordination load for operators. It also gives leadership a cleaner path from raw interview conversations to structured decision input.

This is what production AI interview infrastructure looks like: reliable runtime orchestration, clear operational controls, and data pipelines that stay useful after the call ends.